The CFO’s AI readiness report: Part 2.

The second report in our AI readiness series examines what it takes to scale AI in finance. Even among self-identified AI leaders, only 26% have the full operating stack in place. While many have invested in skills and budget, governance and data readiness often lag. The key takeaway: AI scales in finance only when it runs within controls, with clear rules, usable data, and accountability. Learn why scaling AI depends on governability, not just adoption.

- The finance AI operating stack

- Even AI leaders are not uniformly ready

- The market constraint is not literacy, it’s governability

- The hidden risk among leaders: rules debt

- Data readiness is a scaling gate

- Full-stack readiness exists, but it’s the exception

- What this implies for how AI will scale next

- Practical guidance for CFOs: building the minimum stack

- Why segmentation matters next


Even among organizations that self-identify as AI leaders, only 26% have the full set of operating conditions needed to scale AI in finance.

This helps explain the current market pattern: pilots are common, but durable scale remains rare. In finance, scale is not defined by how quickly AI can generate outputs, but whether those outputs can pass through approvals, exceptions, and audit scrutiny without weakening accountability.

This report, the second in our four-part AI readiness series, examines the five conditions that determine whether AI scales within finance, drawing on data from our research with over 1,500 business and finance leaders. For this analysis, we focus on “AI leaders”, defined as respondents who rated their organization 7–10 on a 1–10 self-assessed AI maturity scale (n=405).

The finance AI operating stack

Across industries, AI can often be trialled with minimal preparation. A team buys a tool, runs a proof-of-concept, and demonstrates time savings.

Even finance is rarely blocked at the pilot stage. It gets blocked the moment AI moves closer to governed decision-making.

Our research reveals five core requirements that determine whether AI can scale inside finance, not just be experimented with. These represent the minimum conditions that leaders recognize as necessary for AI to withstand scrutiny.

The five requirements are:

Execution measures in place: “We've already implemented measures for AI integration.”

In practice: named use-case owners, success metrics, rollout cadence, and adoption tracking.

Minimum rules established: “Our organization has established minimum rules for AI use.”

In practice: what AI is allowed to do, when it must escalate, what gets logged, and who owns the outcome.

Skills and tools available: “Employees have the skills and tools to implement AI effectively.”

In practice: tool access, training, and the ability to build, test, and support AI in production.

Budget committed: “There is investment allocated for AI initiatives.”

In practice: funded roadmap, resourcing (people/time), and budget for data/integration and change management.

Data usable for AI analytics: “Our data can support AI-driven analytics effectively.”

In practice: consistent master data, reliable integrations, and outputs that reconcile back to systems of record.

Each item was rated on a 1–7 agreement scale. For consistency, we treat scores of 6–7 (the “Top-2” scores referred to throughout this report) as “strongly in place.”

Together, these five elements form what we call the finance AI operating stack: the foundation that determines whether AI can move from adoption to durable execution in finance.
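The scoring rule and the idea of a full stack can be sketched in a few lines. This is a minimal illustration, not the survey's actual analysis code: the short dimension keys, the function names, and the sample responses are all assumptions made up for the example.

```python
# The five requirements named in the report; the short keys are our own labels.
DIMENSIONS = ("execution", "rules", "skills", "budget", "data")

TOP2_THRESHOLD = 6  # scores of 6-7 on the 1-7 scale count as "strongly in place"


def top2_flags(scores: dict[str, int]) -> dict[str, bool]:
    """Map each 1-7 agreement score to a Top-2 ("strongly in place") flag."""
    return {dim: scores[dim] >= TOP2_THRESHOLD for dim in DIMENSIONS}


def has_full_stack(scores: dict[str, int]) -> bool:
    """A respondent has the full stack only if all five dimensions are Top-2."""
    return all(top2_flags(scores).values())


def top2_rates(respondents: list[dict[str, int]]) -> dict[str, float]:
    """Share of respondents scoring Top-2 on each dimension."""
    n = len(respondents)
    return {
        dim: sum(r[dim] >= TOP2_THRESHOLD for r in respondents) / n
        for dim in DIMENSIONS
    }


# Made-up responses for illustration only; these are not survey data.
sample = [
    {"execution": 7, "rules": 6, "skills": 7, "budget": 6, "data": 6},
    {"execution": 6, "rules": 4, "skills": 7, "budget": 6, "data": 5},
    {"execution": 5, "rules": 3, "skills": 6, "budget": 7, "data": 6},
    {"execution": 6, "rules": 6, "skills": 5, "budget": 4, "data": 7},
]

rates = top2_rates(sample)
full_stack_share = sum(has_full_stack(r) for r in sample) / len(sample)
```

Note that the full-stack share can be far lower than any single dimension's rate, because a respondent drops out as soon as one of the five scores falls below the threshold.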

As Konstantin Dzhengozov describes, “AI in finance doesn’t scale because the model improves. It scales when the controls, ownership, and data foundations are strong enough to carry it.”

Even AI leaders are not uniformly ready

If AI maturity were simply a question of ambition or experimentation, we would expect organizations that call themselves “leaders” to look broadly prepared across the board.

But finance does not scale AI on intent alone. Scaling requires an operating foundation: rules, execution discipline, usable data, and governance that can hold up once AI moves into real workflows.

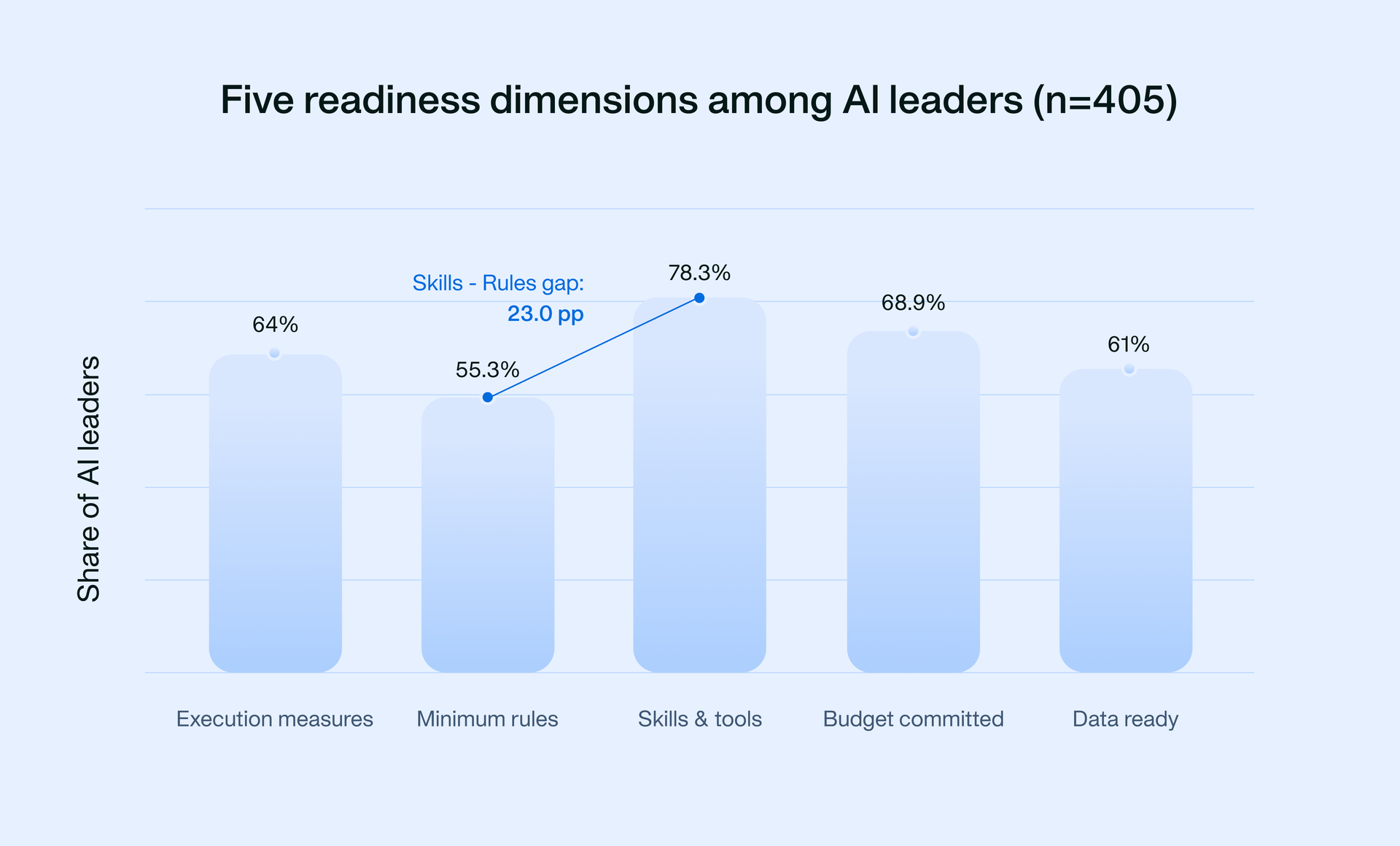

Figure 3 shows that even among the most mature organizations in our sample, readiness is uneven. Leaders tend to build capability first, and only later formalise the guardrails needed for durable scale.

This gap helps explain why many finance teams can demonstrate AI value in pilots, but struggle to operationalize it across the workflows that matter most.

Figure 3: Top-2 agreement rates across five scaling requirements among AI leaders (n=405)

The results show a clear ordering in what finance leaders have in place first: capability and investment come earlier (skills, tools, and budget), while data readiness and minimum rules lag behind.

- Skills and tools: 78%

- Budget committed: 69%

- Execution measures in place: 64%

- Data usable: 61%

- Minimum rules: 55%

Key takeaway

AI maturity isn’t a ladder of use cases. It’s delegation readiness: the ability to move work away from humans without breaking accountability.

The market constraint is not literacy, it’s governability

In this report, governability means AI can operate inside finance controls: clear boundaries on what it can do, escalation on exceptions, logging by default, and a named owner accountable for outcomes.
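To make the four elements of this definition concrete, here is a minimal sketch of a guardrail around one AI-assisted task. Everything in it is hypothetical: the `Guardrail` class, the `auto_limit` threshold, and the owner name are illustrative assumptions, not a description of any particular product or control framework.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    """Hypothetical minimum rules for one AI-assisted finance task."""
    task: str
    owner: str          # named person accountable for the outcome
    auto_limit: float   # boundary: the largest amount AI may handle alone
    log: list[dict] = field(default_factory=list)

    def decide(self, amount: float, ai_recommendation: str) -> str:
        """Apply the boundary, escalate exceptions, and log every decision."""
        if amount <= self.auto_limit:
            outcome = ai_recommendation            # inside the boundary
        else:
            outcome = f"escalate to {self.owner}"  # exception: a human decides
        # Logging by default: every call leaves an audit record.
        self.log.append({
            "task": self.task,
            "amount": amount,
            "ai_recommendation": ai_recommendation,
            "outcome": outcome,
            "owner": self.owner,
        })
        return outcome


# Illustrative values only.
rail = Guardrail(task="expense approval", owner="finance.controller", auto_limit=500.0)
small = rail.decide(120.0, "approve")    # within the limit: AI recommendation stands
large = rail.decide(4_800.0, "approve")  # above the limit: routed to the owner
```

The design choice worth noting is that logging happens on every path, not only on escalations, so the record shows what the system was allowed to do as well as what it was stopped from doing.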

Much of the public AI narrative assumes finance adoption is slow because teams lack skills or comfort. But the data suggests a more specific reality.

Among leaders, skills and tools are the strongest dimension (78% Top-2).

Minimum rules are the weakest (55% Top-2).

That gap matters because finance AI isn’t simply a productivity layer. The moment AI influences approvals, classifications, spend decisions, or audit evidence, governance becomes the gating factor.

The constraint is shifting from “Can we use AI?” to “Can we use AI in a way we can defend as finance leaders?”

As Konstantin explains:

“Finance teams are getting stuck because nobody can answer, clearly, who owns the output. Yes, the technology is powerful, but that alone can’t drive results for finance leaders who work under explicit audit, policy, and delegation constraints.”

This is where scaling diverges, and it shows up first in whether minimum rules are in place.

The hidden risk among leaders: rules debt

Among AI leaders, skills are rarely the constraint, but rules often are. In our data, 32.1% of leaders rate skills as strongly in place but do not have minimum rules in place, and 21.7% report strong execution measures without minimum rules.

That gap is rules debt: AI usage expands faster than permissioning, escalation pathways, logging, and ownership are defined. Early gains can mask the issue, but it becomes visible when AI outputs enter approval flows, trigger exceptions, or face audit scrutiny, and the organization cannot clearly evidence what the system was allowed to do.
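One way to flag rules debt in survey-style data is to look for respondents who score Top-2 (6–7) on a capability dimension but not on minimum rules. The sketch below assumes that reading; the function names, dimension keys, and sample scores are hypothetical and are not the survey's data or method.

```python
TOP2_THRESHOLD = 6  # 6-7 on the 1-7 agreement scale counts as "strongly in place"


def has_rules_debt(scores: dict[str, int], capability: str = "skills") -> bool:
    """True when the capability is strongly in place but minimum rules are not."""
    return scores[capability] >= TOP2_THRESHOLD and scores["rules"] < TOP2_THRESHOLD


def rules_debt_share(respondents: list[dict[str, int]], capability: str = "skills") -> float:
    """Share of respondents carrying rules debt against the given capability."""
    flagged = sum(has_rules_debt(r, capability) for r in respondents)
    return flagged / len(respondents)


# Made-up responses for illustration only.
sample = [
    {"skills": 7, "execution": 6, "rules": 4},  # strong skills and execution, weak rules
    {"skills": 6, "execution": 5, "rules": 6},  # rules in place: no debt
    {"skills": 7, "execution": 7, "rules": 7},  # rules in place: no debt
    {"skills": 5, "execution": 5, "rules": 3},  # weak rules, but no strong capability
    {"skills": 6, "execution": 4, "rules": 5},  # strong skills, weak rules
]

skills_debt = rules_debt_share(sample, "skills")
execution_debt = rules_debt_share(sample, "execution")
```

The fourth respondent is deliberately not counted: weak rules alone are a gap, but the debt pattern described above requires capability that has run ahead of the rules.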

We go deeper into rules debt in Part 3. For now, the message is simple: if you can’t state what AI is allowed to do and who owns the outcome, you’re not ready to scale it.

Data readiness is a scaling gate

The second hard gate is data usability. Only 61% of leaders strongly agree that their data can support AI-driven analytics effectively.

When master data is inconsistent and mappings break across entities, AI outputs can’t be reconciled back to trusted financial records. That’s data debt: the organization has intent and capability, but the data foundation can’t carry AI into operational workflows.

We unpack data debt in Part 3. The takeaway here is straightforward: if outputs can’t be tied back to a source of truth, you can’t operationalize AI in finance.

Full-stack readiness exists, but it’s the exception

A natural question emerges after Figure 3:

How many leaders have all five requirements strongly in place?

The answer: Only 26% of AI leaders score Top-2 across all five dimensions.

This is a critical nuance: it shows that full-stack readiness is real in the market today, but it is not the default posture, even among leaders.

So two things can both be true:

- AI is here

- AI is scalable in finance

But still, scalable finance AI is a minority operating condition. Most organizations are leading in parts, not in full-stack operational readiness.

What this implies for how AI will scale next

The five dimensions covered above imply a specific trajectory for the adoption of finance AI.

AI will scale first where:

- The rules requirements are lighter

- Data demands are narrower

- The cost of error is manageable

That means bounded, assistive workflows before core decision workflows.

Scaling into close, controls, approvals, and governance will depend less on model performance and more on minimum operating design catching up.

Konstantin adds:

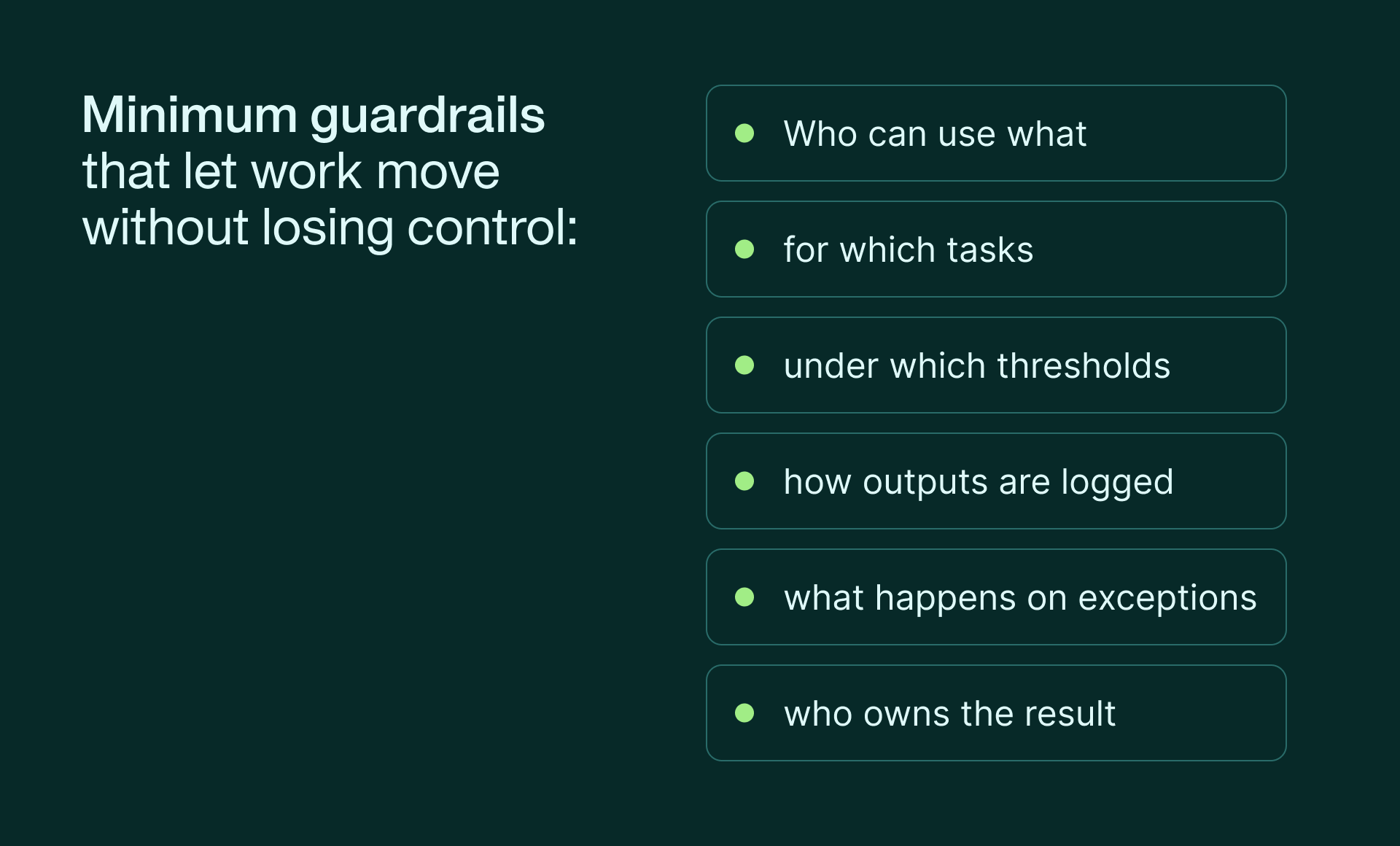

“Finance doesn’t need a governance empire. It needs minimum enforceable guardrails that let work move without losing control.”


Data foundations will determine whether AI becomes operational or remains a side layer.

Without reliable integration and clean data, AI stays assistive. With it, AI becomes part of the finance operating model.

Practical guidance for CFOs: building the minimum stack

For finance leaders, the takeaway isn’t that AI leaders are failing.

It’s that scaling requires deliberate operating design.

A 30-second diagnostic for CFOs

Pick one workflow where AI already influences a finance decision (directly or indirectly). If you cannot evidence the following, you are not ready to scale that workflow:

- what AI is permitted to do (by role, task, and threshold)

- how exceptions are escalated and resolved

- what is logged by default to support audit scrutiny

- who is accountable for the outcome

- how the output reconciles back to systems of record

In practice, scaling stalls when one of these is missing, even if pilots look successful.
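The diagnostic above can be written down as a simple checklist that reports which items a workflow cannot yet evidence. This is only a sketch: the short keys, the function names, and the example workflow are assumptions introduced for illustration.

```python
# The five diagnostic items, keyed by hypothetical short names.
DIAGNOSTIC = {
    "permissions": "what AI is permitted to do (by role, task, and threshold)",
    "escalation": "how exceptions are escalated and resolved",
    "logging": "what is logged by default to support audit scrutiny",
    "owner": "who is accountable for the outcome",
    "reconciliation": "how the output reconciles back to systems of record",
}


def missing_evidence(evidence: dict[str, bool]) -> list[str]:
    """Return the diagnostic items a workflow cannot yet evidence.

    Items absent from `evidence` are treated as missing.
    """
    return [text for key, text in DIAGNOSTIC.items() if not evidence.get(key, False)]


def ready_to_scale(evidence: dict[str, bool]) -> bool:
    """A workflow passes only when nothing is missing."""
    return not missing_evidence(evidence)


# Made-up example: logging is not evidenced and reconciliation was never assessed.
invoice_coding = {"permissions": True, "escalation": True, "logging": False, "owner": True}
gaps = missing_evidence(invoice_coding)
```

Treating an unassessed item as missing is a deliberate choice here: in a control context, "we have not checked" should block scaling just as much as "we checked and it failed".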


Why segmentation matters next

Figure 3 revealed that AI leaders aren’t uniform. Some have the full operating stack in place. Others are execution-led but rules-light, or governance-forward but constrained by data.

Clearly, “AI leader” isn’t one category. That’s why our third report in the series explores two things: the forms of operational debt that quietly block scale even at the top end of maturity (namely, rules debt and data debt), and the distinct operating postures finance teams fall into as a result.

Because the next phase of finance AI won’t be defined by adoption. It will be defined by which operating stacks can actually hold up under scrutiny.

In the meantime, if you want to see what governable AI looks like in practice, explore how Payhawk enables genuine finance orchestration with native AI built directly into spend workflows, supported by built-in controls, audit trails, and accountability by design. You’ll see concrete examples of delegation that strengthen, rather than weaken, approvals, policy enforcement, and audit readiness.

Methodology:

Using affirmative statements developed in close collaboration with finance and business leaders, IResearch conducted interviews across eight countries to reflect genuine operational realities and challenges. Respondents: 1,500.

Coverage included:

- Regions: DACH, EU, Spain, France, Benelux, UK & Ireland, United States

- Seniority: C-suite, VPs, Directors, and senior individual contributors

- Functions: Finance, Accounting, Sales, HR, Procurement

- Industries: Services, Digital, Manufacturing, Healthcare, Education & Non-profit, B2C

- Company size: 50–100 FTE, 101–250 FTE, 251–500 FTE, 501–1,000 FTE, and 1,000+ FTE